Hi.

I’d like to explain what LLM architecture looks like in practice. What actually happens under the hood?

Most of us use AI today. We ask questions, generate code, analyze data. But we rarely stop to think about what’s really underneath it all.

And underneath, there is no self-awareness. There is statistics. There is a collection of components that work together to create the illusion of intelligence. And that is exactly what agent-based system architecture is about.

That’s why, to understand why something works (or doesn’t), you have to look more broadly. Not just at the model, but at the entire system. Because it is always a system, not just a model. LLM architecture in practice means designing the whole setup: from the agent, through memory and tools, to managing context, costs and security.

If we don’t understand this, it’s hard to understand why something works brilliantly… and why something else ends in complete nonsense that sounds very convincing.

Table of Contents

The user, the agent and the LLM – who is actually doing the work?

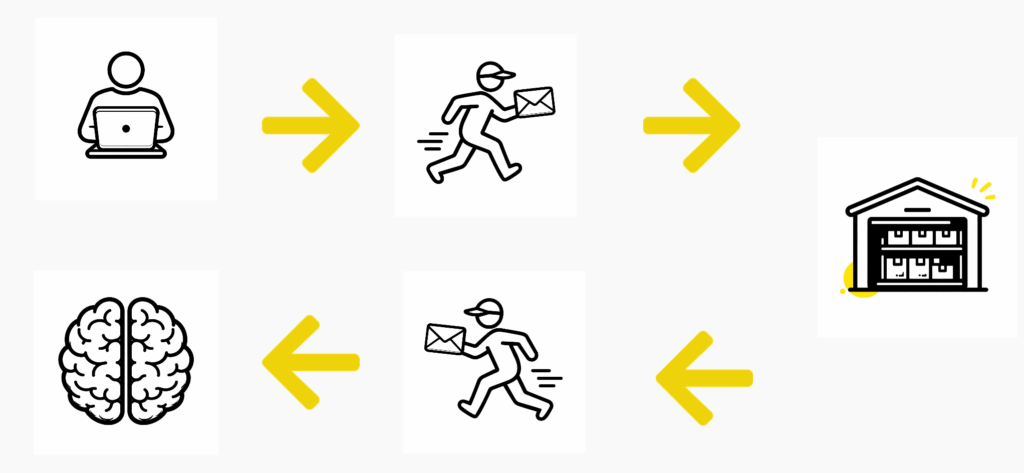

Let’s start with the basic flow.

The agent sounds futuristic, but in practice it’s just conventional code. A script. There is no superintelligence there. It doesn’t analyze the question, it doesn’t reflect on its meaning. It’s only responsible for delivery.

The LLM generates the response. Usually polite, often long. Sometimes it starts with “Great question!”, even if the question was completely off the mark.

However, it has one fundamental flaw: a very short memory.

The model operates exclusively on what it receives as input at a given moment. Every query is a fresh start for it. If it seems like it remembers the previous conversation, it’s only because the agent stores the history and attaches it to subsequent requests.

This is how context is created.

Long-term memory – why the chat “remembers”

If the model doesn’t remember anything, why can we hold a conversation?

Because the agent remembers.

After each response, the agent saves the conversation in so-called long-term memory. This can be something simple like a JSON file, or a vector database in a more complex system. When the next question arrives, the agent:

– goes to storage,

– collects the conversation history,

– appends it to the new prompt,

– sends the whole package to the LLM.

This is how an ever-growing bundle of data is created. Storage grows, the prompt inflates. The longer the conversation, the larger the input sent to the model.

Tools and ping-pong

Agent-based systems are not just chat.

An LLM can be given a list of tools. Each tool has a description: when it can be used and what the invocation should look like.

The model can return an instruction like: “call this tool with these parameters.” The agent executes the instruction, takes the result, and sends it back to the model.

Sometimes this turns into a ping-pong loop:

model → agent → tool → agent → model → …

Sometimes the loop starts spinning endlessly and it’s clear that it’s going nowhere. That’s when it needs to be stopped. And this is where another architectural element comes in.

Token counters – how much it really costs

We pay for everything the model has to read. We pay for everything it generates.

Every word in the context is tokens. Every tool, every previous response is also tokens. That’s why politeness in the age of tokenization is a bit of a luxury.

This is not a detail. It’s a real aspect of designing LLM architecture.

How an LLM “thinks”: from words to probability

An LLM does not understand meanings, only statistical correlations between numbers. The process looks like this:

Tokenization: The text is chopped into pieces (tokens). The model doesn’t see letters, only numerical identifiers of word fragments (for example “broth” split into parts).

Embedding: Each token becomes a point in a multidimensional space (a vector). Words with similar contexts (for example “broth” and “soup”) end up close to each other.

Attention mechanism: This is where the model “understands” context. It analyzes relationships between all words in a sentence, so it knows that when you cook broth, the word “water” refers to a pot, not a kettle.

Prediction: The model does not “answer.” It calculates statistics: which token should most likely come next in a given sequence.

Detokenization: The selected token ID is converted back into readable text.

The key insight: the model does not know what broth physically is. It only knows that after the words “grandma cooked,” in a massive corpus of texts, the vector coordinates corresponding to “broth” appeared with very high probability.

Limited context window

Everything we feed into the model must fit into its context window. And that window is limited and strictly tied to the model.

Into that window try to fit:

– the user prompt,

– conversation history,

– the list of tools,

– additional settings.

With very long conversations, part of the history simply becomes invisible. Additionally, there is the “Lost in the Middle” phenomenon. Models remember the beginning and the end of the context better. What sits in the middle can blur. More is not always better.

Hallucinations – a natural consequence

Hallucinations are not a bug. They are a consequence of the nature of LLMs. The model is supposed to generate the most probable sequence of words. If it doesn’t have complete data, it will still generate something.

This is influenced by:

– model size,

– weight accuracy,

– quantity and quality of training data,

– training duration,

– access to external data,

– prompt quality.

But even with the best parameters, it’s still probabilistic.

Context poisoning

If in one thread I talk about tacos, dips, and cheddar, and later in the same thread I ask for quiz questions, the model may still operate in the world of tacos. This is context poisoning.

Sometimes it’s immediately visible and funny. In technical projects, it can be much less obvious.

Prompt injection and jailbreak

In classical IT, code (instructions) is separated from data (user input).

In LLMs, that separation does not exist. The model sees everything as one sequence of characters with the same priority. It cannot distinguish the creator’s “command” from a user’s “deception.”

Prompt injection is taking control over the logic (overwriting instructions).

Jailbreak is breaking ethical safeguards (forcing prohibited content).

Safeguards are only statistical: we can ask the model to obey us, but a clever prompt can always tilt the probability in the attacker’s favor.

How I work with this in practice

First: I verify.

I don’t treat the model’s output as ultimate truth. Review is essential.

Second: I let it learn from mistakes. The model can generate tests, run them in a loop, and fix its output. But I review the final result.

Third: least privilege applies to LLMs too.

I don’t give it access to everything. Even if it uses a read-only replica, it can still generate output we don’t want to see in the wrong place.

Fourth: feed it knowledge. If I work with a specific technology, I give it documentation, repository rules, and project context. This improves output quality.

Fifth: I clean up context. I open new threads when changing topics. I create summaries. I don’t let the prompt grow uncontrollably.

Why it works — and why it doesn’t

It works because LLMs are great at reproducing language patterns and operating on large amounts of data.

It doesn’t work because:

– it’s statistics, not consciousness,

– there is no grounded reasoning,

– the context window is limited,

– hallucinations are natural,

– context can be poisoned,

– there is no hard boundary between instruction and input.

LLM architecture in practice is the skill of designing a system that takes these limitations into account. For me, it’s simply a tool. Very powerful. Very useful. But still a tool.

And that’s how I treat it.